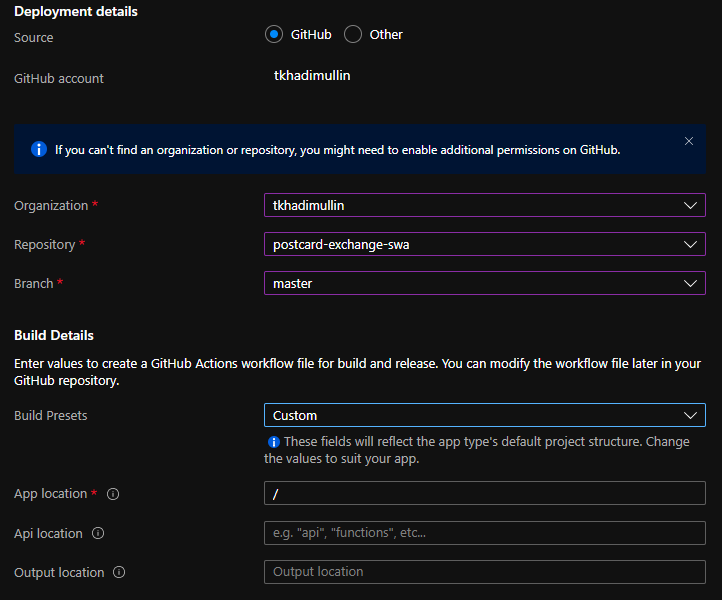

Although Microsoft claims “First-class GitHub and Azure DevOps integration” for Static Web Apps, one is significantly easier to use than the other. Let’s take a quick look at how many features we give up by sticking with Azure DevOps (ADO):

| Feature | GitHub | ADO |
| --- | --- | --- |
| Build/Deploy pipelines | Automatically adds pipeline definition to the repo | Requires manual pipeline setup |
| Azure Portal support | ✓ | ✗ |
| VS Code Extension | ✓ | ✗ |
| Staging environments and Pull Requests | ✓ | ✗ |

Looks like a lot of functionality is missing. This raises the question: can we do something about it?

Turns out we can…sort of

Looking a bit further into the ADO build pipeline, we notice that Microsoft has published this task on GitHub. Bingo!

The process seems to run a single script that in turn runs a docker image, something like this:

...

docker run \

-e INPUT_AZURE_STATIC_WEB_APPS_API_TOKEN="$SWA_API_TOKEN" \

...

-v "$mount_dir:$workspace" \

mcr.microsoft.com/appsvc/staticappsclient:stable \

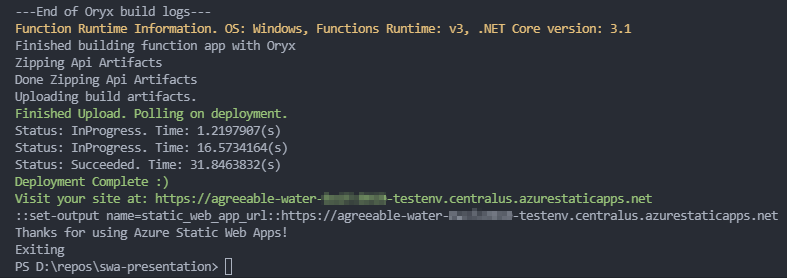

./bin/staticsites/StaticSitesClient uploadWhat exactly StaticSitesClient does is shrouded with mystery, but upon successful build (using Oryx) it creates two zip files: app.zip and api.zip. Then it uploads both to Blob storage and submits a request for ContentDistribution endpoint to pick the assets up.

It’s Docker – it runs anywhere

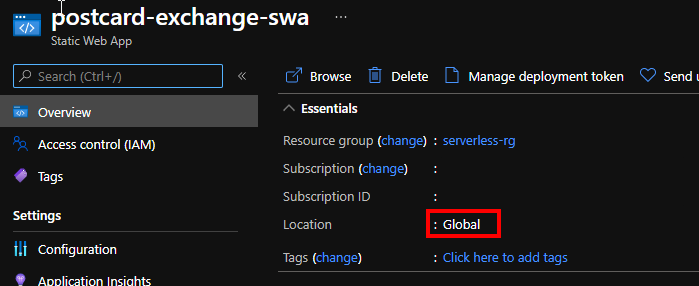

This image does not have to run on ADO or GitHub! We can run this container locally and deploy without even committing the source code. All we need is a deployment token:

docker run -it --rm \

-e INPUT_AZURE_STATIC_WEB_APPS_API_TOKEN=<your_deployment_token>

-e DEPLOYMENT_PROVIDER=DevOps \

-e GITHUB_WORKSPACE="/working_dir"

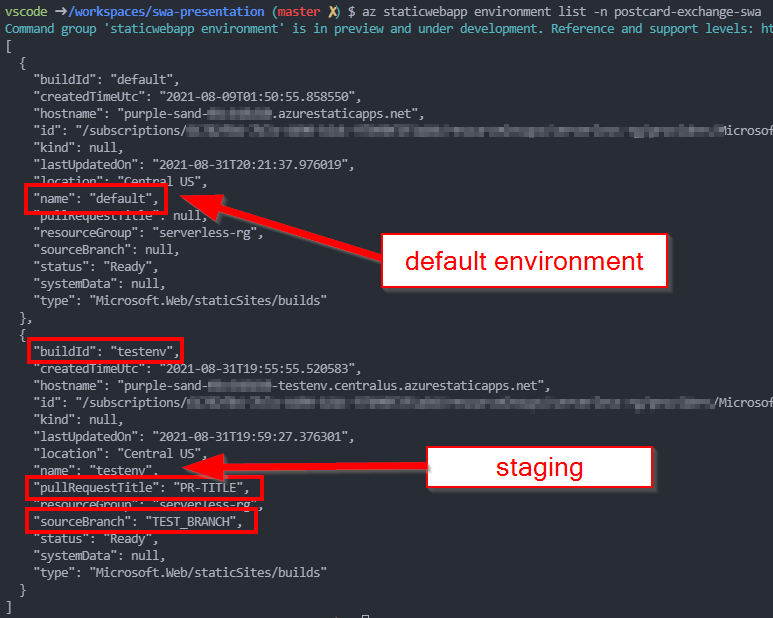

-e IS_PULL_REQUEST=true \

-e BRANCH="TEST_BRANCH" \

-e ENVIRONMENT_NAME="TESTENV" \

-e PULL_REQUEST_TITLE="PR-TITLE" \

-e INPUT_APP_LOCATION="." \

-e INPUT_API_LOCATION="./api" \

-v ${pwd}:/working_dir \

mcr.microsoft.com/appsvc/staticappsclient:stable \

./bin/staticsites/StaticSitesClient upload
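To avoid retyping the local deployment command, it can be wrapped in a small shell function. What follows is only a minimal sketch and not part of Microsoft's tooling: the function name `swa_deploy` and the `SWA_DEPLOYMENT_TOKEN` and `DRY_RUN` variables are hypothetical names chosen for illustration, while the container options are the ones used in the command shown earlier.

```shell
#!/bin/sh
# Hypothetical wrapper around the local deployment command from this post.
# swa_deploy, SWA_DEPLOYMENT_TOKEN and DRY_RUN are names invented for this sketch.

swa_deploy() {
  _swa_env="${1:-TESTENV}"        # staging environment name
  _swa_branch="${2:-TEST_BRANCH}" # branch label passed to the client

  # Refuse to run without a deployment token.
  if [ -z "${SWA_DEPLOYMENT_TOKEN:-}" ]; then
    echo "SWA_DEPLOYMENT_TOKEN is not set" >&2
    return 1
  fi

  # Build the argument list; -it is dropped so the function also works
  # in non-interactive shells.
  set -- docker run --rm \
    -e "INPUT_AZURE_STATIC_WEB_APPS_API_TOKEN=$SWA_DEPLOYMENT_TOKEN" \
    -e DEPLOYMENT_PROVIDER=DevOps \
    -e GITHUB_WORKSPACE=/working_dir \
    -e IS_PULL_REQUEST=true \
    -e "BRANCH=$_swa_branch" \
    -e "ENVIRONMENT_NAME=$_swa_env" \
    -e PULL_REQUEST_TITLE=PR-TITLE \
    -e INPUT_APP_LOCATION=. \
    -e INPUT_API_LOCATION=./api \
    -v "$PWD:/working_dir" \
    mcr.microsoft.com/appsvc/staticappsclient:stable \
    ./bin/staticsites/StaticSitesClient upload

  if [ -n "${DRY_RUN:-}" ]; then
    # Print the command instead of running it. The token is NOT masked.
    printf '%s\n' "$*"
  else
    "$@"
  fi
}
```

With the token exported, `DRY_RUN=1 swa_deploy MYENV my-branch` prints the command for review, and `swa_deploy MYENV my-branch` runs it from the current directory. The token itself can be copied from the Static Web App resource in the Portal; the Azure CLI command `az staticwebapp secrets list --name <app_name>` should return it as well, though that has not been verified against this setup.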

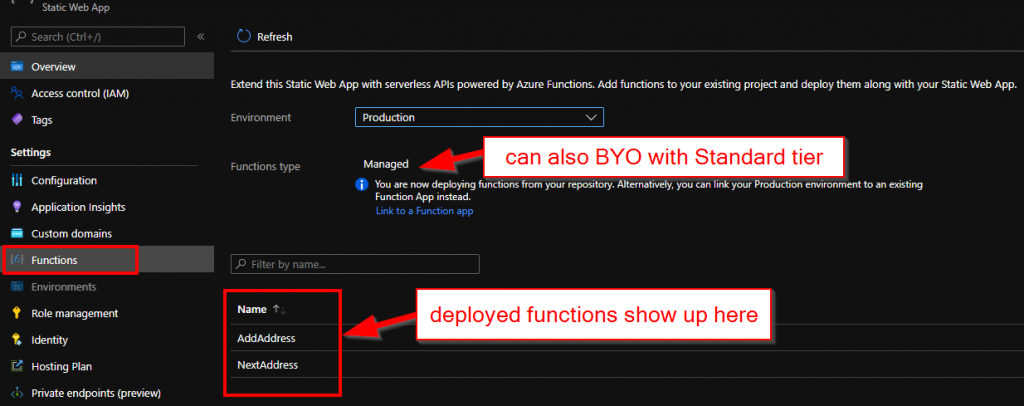

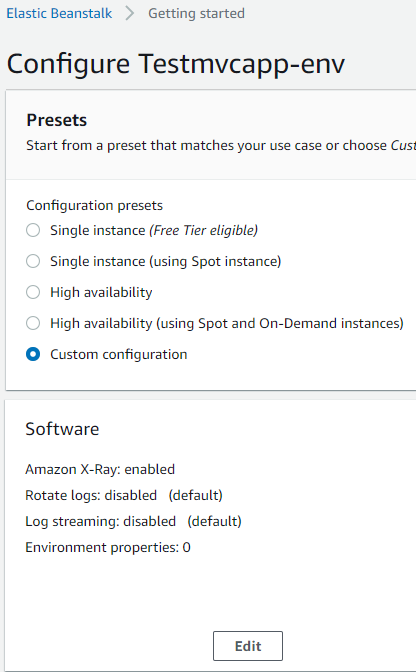

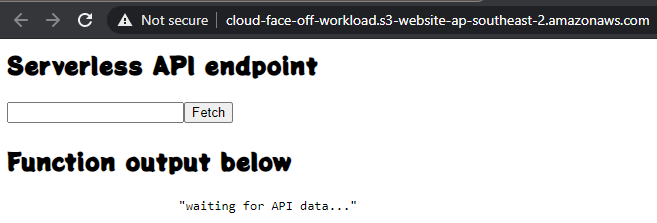

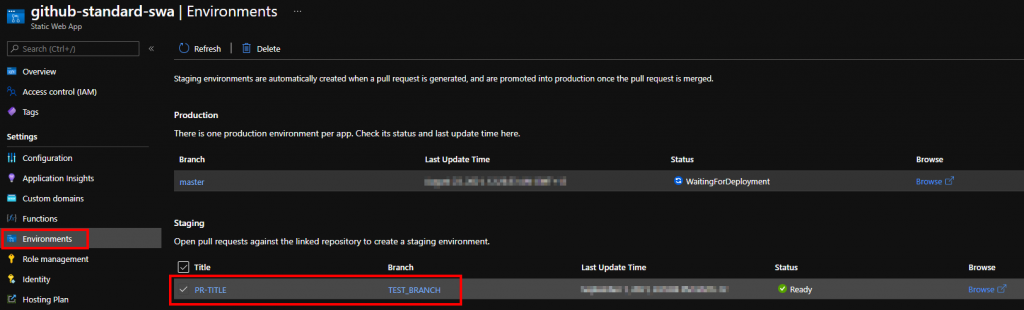

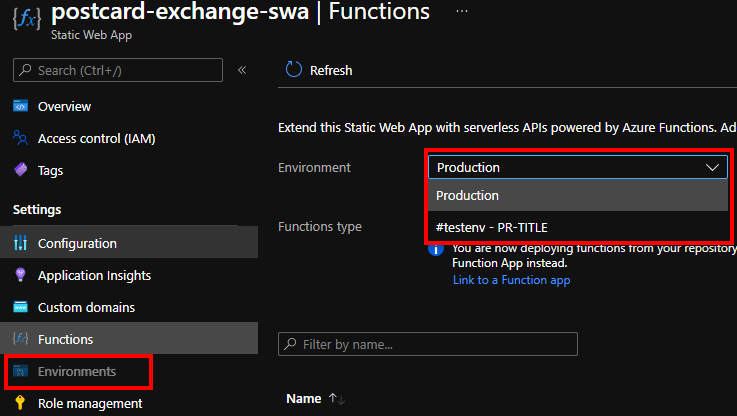

Also notice how this deployment created a staging environment:

Word of caution

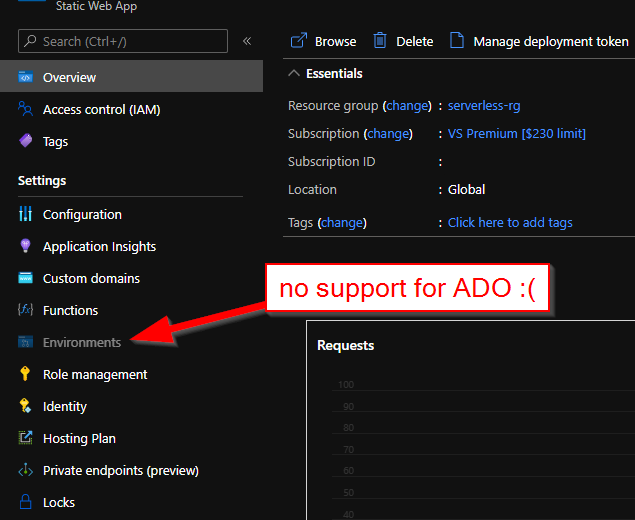

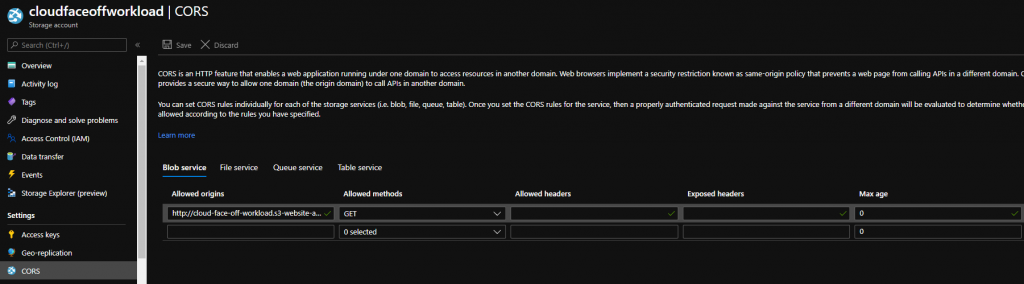

Even though it seems like a pretty good little hack, this is not supported. The Portal will also bug out and refuse to display Environments correctly if the resource was created with the “Other” workflow:

Conclusion

Diving deep into Static Web Apps deployment is lots of fun. It may also help in situations where external source control is not available. For real production workloads, however, we’d recommend sticking with GitHub flow.