There’s a quiet revolution happening in self-hosted software. Between Immich, Jellyfin, Home Assistant, Jitsi and a dozen others, you can build yourself quite a capable home or office setup without sending a single byte to the cloud. We’ve been running a stack of self-hosted services on our internal network for a while now, all neatly managed through Docker, with Traefik as our reverse proxy of choice.

Everything was humming along nicely until one day we tried to set up Jitsi Meet for internal video calls. The web UI loaded fine, but the moment we tried to join a call — nothing. No camera, no microphone. Just a cryptic error about getUserMedia being undefined.

Browsers and their trust issues

Turns out, modern browsers flat out refuse to give web apps access to certain APIs unless the page is served over HTTPS. This isn’t some obscure edge case either — it’s a long list:

- Camera & Microphone (getUserMedia): the one that bit us

- Service Workers: so no PWA features or push notifications

- Geolocation API

- Clipboard API

- Web Bluetooth / USB / NFC

They call these “secure context” requirements, and there’s no way around them. Chrome, Firefox, Safari — they all enforce it. Localhost gets a pass, but anything on LAN at http://192.168.x.x or a local hostname does not.

The old way: certbot and HTTP-01

Normally you’d chuck certbot into the mix and call it a day. We’ve done that before for public-facing services. But HTTP-01 validation needs the ACME server to reach your host over port 80 from the internet. For internal services that’s a non-starter — we’d have to punch a hole in the firewall and expose an endpoint just to prove we own a domain. Always undesirable, always scary.

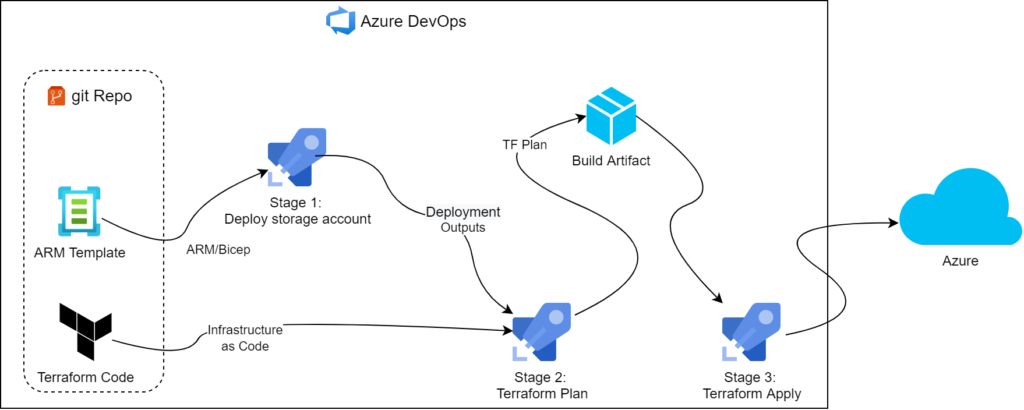

DNS-01 changes everything

There’s a DNS-01 validation method that we’d known about for years but always written off as “that complicated thing that needs programmable DNS.” You had to be on Azure DNS, Route53, or Cloudflare — not your average registrar nameservers.

But then one day our annual domain renewal bill came in at a price that warranted churning providers. Since we were moving anyway, it made sense to park the zones in Azure DNS. And suddenly DNS-01 was on the table.

The interesting thing about DNS-01 is that it requires zero external exposure. The ACME server validates ownership by checking a TXT record — no inbound connections needed. And since Traefik supports it natively, we can get wildcard certs for *.yourdomain.co.nz that cover every internal service automagically.
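If you want to watch the challenge happen, the thing to look for is a TXT record under the _acme-challenge label of your domain. Below is a rough sketch for poking at it from a terminal. The helper names acme_challenge_name and lookup_challenge are made up for illustration, and it assumes dig is installed. The record normally only exists while a challenge is in flight, so an empty answer at other times is expected.

```shell
# Build the DNS-01 challenge record name for a domain.
# A wildcard (*.example.com) and its base domain share the same record name.
acme_challenge_name() {
  local domain
  domain=${1#"*."}   # strip a leading "*." if present
  printf '_acme-challenge.%s\n' "$domain"
}

# Query the TXT record (hypothetical helper; needs dig and a working resolver).
lookup_challenge() {
  dig +short TXT "$(acme_challenge_name "$1")"
}
```

Running lookup_challenge '*.yourdomain.co.nz' while Traefik is requesting a cert should show the token that the ACME server checks. Nothing has to connect to your network for that to work.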

The Traefik stack

Here’s our Traefik setup with DNS-01 via Azure DNS:

```yaml
version: "3.3"

services:
  traefik:
    image: traefik:mimolette
    container_name: traefik
    restart: unless-stopped
    command:
      - "--entrypoints.web.address=:80"
      - "--entrypoints.websecure.address=:443"
      - "--entrypoints.websecure.http.tls.certResolver=le"
      - "--entrypoints.websecure.http.tls.domains[0].main=yourdomain.co.nz"
      - "--entrypoints.websecure.http.tls.domains[0].sans=*.yourdomain.co.nz"
      - "--providers.docker=true"
      - "--providers.docker.exposedbydefault=true"
      - "--certificatesresolvers.le.acme.dnschallenge=true"
      - "--certificatesresolvers.le.acme.dnschallenge.provider=azuredns"
      - "--certificatesresolvers.le.acme.email=admin@yourdomain.co.nz"
      - "--certificatesresolvers.le.acme.storage=/letsencrypt/acme.json"
    ports:
      - "80:80"
      - "443:443"
    environment:
      - "AZURE_SUBSCRIPTION_ID=your-subscription-id"
      - "AZURE_RESOURCE_GROUP=dns-rg"
      - "AZURE_CLIENT_ID=your-client-id"
      - "AZURE_TENANT_ID=your-tenant-id"
      - "AZURE_CLIENT_SECRET=your-client-secret"
      - "AZURE_DNS_ZONE=yourdomain.co.nz"
    volumes:
      - "./traefik_data:/letsencrypt"
      - "/var/run/docker.sock:/var/run/docker.sock:ro"
    networks:
      - default
      - jitsi_default

networks:
  default:
  jitsi_default:
    external: true
```

The magic is in the entrypoints config. By setting certResolver=le and specifying the domain with a wildcard SAN at the entrypoint level, every service that Traefik picks up automatically gets a valid cert. No per-service certificate config needed.

There’s a nice security bonus here too. Since our DNS zone is public, you might worry about advertising all your internal service hostnames as individual A or CNAME records for the world to see. But with a wildcard cert we only need a single *.yourdomain.co.nz DNS record pointing at Traefik’s local IP — yes, a public DNS record pointing at 192.168.1.x. It’s perfectly valid, and it means nobody on the outside can enumerate your internal services from DNS. Traefik handles the routing based on the Host header, so the individual service names never appear in your zone file.
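For the record itself, something along these lines should do it with the Azure CLI. Treat it as a sketch: the address 192.168.1.10 is a made-up stand-in for wherever your Traefik host lives, the function names are ours, and it assumes you are already logged in with az and have dig around for the check.

```shell
# Assumed values: adjust to your own zone and Traefik host.
RESOURCE_GROUP="dns-rg"
DNS_ZONE="yourdomain.co.nz"
TRAEFIK_IP="192.168.1.10"   # hypothetical LAN address of the Docker host running Traefik

# Add the private address to the wildcard A record set "*" in the public zone.
create_wildcard_record() {
  az network dns record-set a add-record \
    --resource-group "$RESOURCE_GROUP" \
    --zone-name "$DNS_ZONE" \
    --record-set-name "*" \
    --ipv4-address "$TRAEFIK_IP"
}

# Resolve an arbitrary name under the zone; it should come back as the Traefik host.
check_wildcard() {
  dig +short A "$1.$DNS_ZONE"
}
```

After create_wildcard_record has run and the zone has propagated, check_wildcard meet (or any other label) should print the same private address, without any per-service names living in the zone.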

Adding Jitsi to the mix

With Traefik handling TLS, the Jitsi stack just needs to serve plain HTTP and let Traefik do the rest:

```yaml
version: "3.3"

services:
  web:
    image: jitsi/web:stable
    restart: unless-stopped
    environment:
      - PUBLIC_URL=https://meet.yourdomain.co.nz
      - DISABLE_HTTPS=1
      - ENABLE_LOBBY=1
    labels:
      - "traefik.http.routers.jitsi.rule=Host(`meet.yourdomain.co.nz`)"
      - "traefik.http.services.jitsi.loadbalancer.server.port=80"
    networks:
      - default

  # ... more stuff here ...
```

The key bits: DISABLE_HTTPS=1 tells Jitsi’s web container to not bother with its own certs, and the Traefik labels on the web service are all it takes to wire it up. The JVB (video bridge) still needs its UDP port exposed directly since WebRTC media doesn’t go through the reverse proxy.

Fire up both stacks, point meet.yourdomain.co.nz at your Docker host in local DNS, and you’ve got Jitsi with a proper green padlock. Camera and microphone work without a hitch.
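If you’d rather confirm the certificate from a terminal than trust the padlock, a quick check like the one below can help. It is only a sketch: show_cert is a name we made up, and the -ext option assumes OpenSSL 1.1.1 or newer.

```shell
# Print subject, expiry, and SANs of the certificate served for a hostname.
# Usage: show_cert meet.yourdomain.co.nz [port]
show_cert() {
  local host="$1"
  local port="${2:-443}"
  # -servername sends SNI so the proxy can select the matching certificate.
  openssl s_client -connect "$host:$port" -servername "$host" </dev/null 2>/dev/null \
    | openssl x509 -noout -subject -enddate -ext subjectAltName
}
```

With the setup above, show_cert meet.yourdomain.co.nz should list the wildcard name among the subject alternative names once Traefik has obtained the cert.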

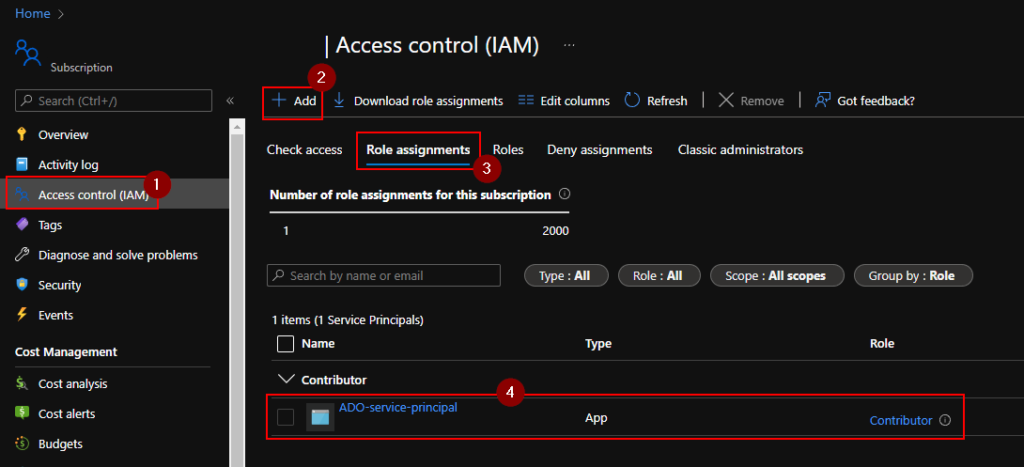

Setting up the Azure service principal

The one remaining piece is giving Traefik permission to create those DNS TXT records. We need an Azure service principal scoped to just the DNS zone:

```shell
# Create a service principal with DNS Zone Contributor role
SUBSCRIPTION_ID="your-subscription-id"
RESOURCE_GROUP="dns-rg"
DNS_ZONE="yourdomain.co.nz"
SCOPE="/subscriptions/$SUBSCRIPTION_ID/resourceGroups/$RESOURCE_GROUP/providers/Microsoft.Network/dnszones/$DNS_ZONE"

az ad sp create-for-rbac \
  --name "traefik-acme" \
  --role "DNS Zone Contributor" \
  --scopes "$SCOPE"

# Output will include appId, password, and tenant; plug those into
# AZURE_CLIENT_ID, AZURE_CLIENT_SECRET, and AZURE_TENANT_ID respectively
```

Just remember the client secret expires (default is one year), so set yourself a calendar reminder or you’ll be debugging cert renewals in 12 months wondering what went wrong.
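A calendar reminder works, but a small script can count the days for you. The sketch below assumes GNU date (the -d option), and the helper names days_between and days_until are ours, not part of any tool.

```shell
# Whole days between two dates (GNU date assumed; both parsed as UTC).
days_between() {
  local start end
  start=$(date -u -d "$1" +%s)
  end=$(date -u -d "$2" +%s)
  echo $(( (end - start) / 86400 ))
}

# Days left from today until the given expiry date (hypothetical helper).
days_until() {
  days_between "$(date -u +%Y-%m-%d)" "$1"
}
```

To feed it a real expiry date, something like az ad app credential list --id "$AZURE_CLIENT_ID" --query "[].endDateTime" -o tsv should print it. The endDateTime field name is what recent Azure CLI versions return as far as we know, and older ones may name it differently, so check the raw output first. Pass the result to days_until and alert when the number gets small.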