Last time we took a peek under the hood of Static Web Apps, we discovered a Docker container that allowed us to do custom deployments. This, however, left us with an issue: we could create staging environments, but could not quite call it a day because we had no way to clean up after ourselves.

There is more to custom deployments

Further inspection of the GitHub Actions config revealed one more action that we could potentially exploit to take full advantage of custom workflows. It is called “close”:

    name: Azure Static Web Apps CI/CD
    ....
    jobs:
      close_pull_request_job:
        ... bunch of conditions here
            action: "close" # that is our hint!

With the above in mind, we can make an educated guess on how to invoke it with docker:

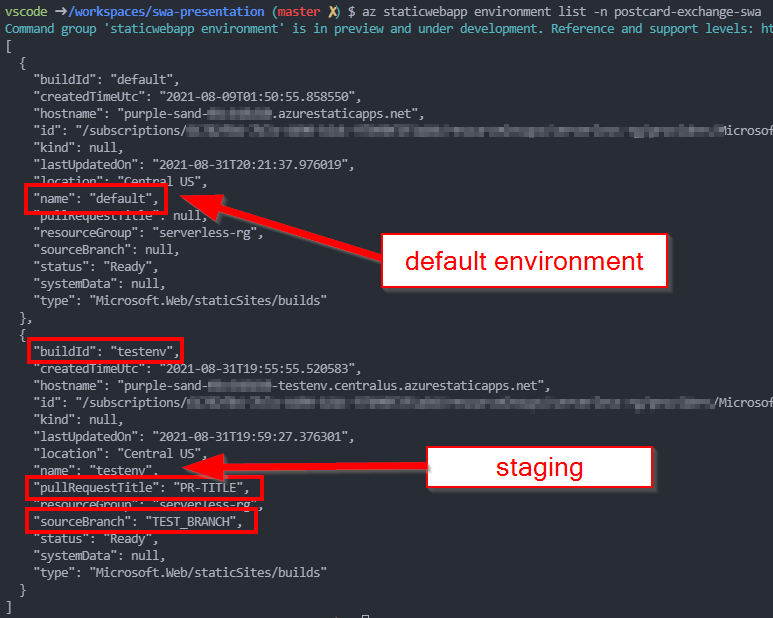

    docker run -it --rm \
      -e INPUT_AZURE_STATIC_WEB_APPS_API_TOKEN="<your deployment token>" \
      -e DEPLOYMENT_PROVIDER=DevOps \
      -e GITHUB_WORKSPACE="/working_dir" \
      -e IS_PULL_REQUEST=true \
      -e BRANCH="TEST_BRANCH" \
      -e ENVIRONMENT_NAME="TESTENV" \
      -e PULL_REQUEST_TITLE="PR-TITLE" \
      mcr.microsoft.com/appsvc/staticappsclient:stable \
      ./bin/staticsites/StaticSitesClient close --verbose

Running this does indeed close off the environment. That’s it!

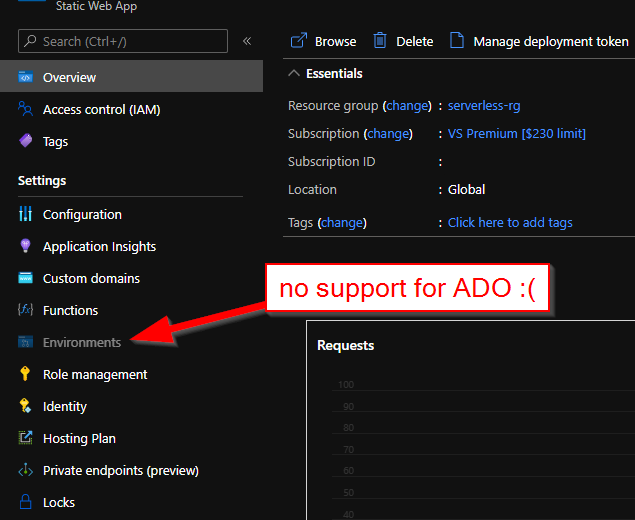

Can we build an ADO pipeline though?

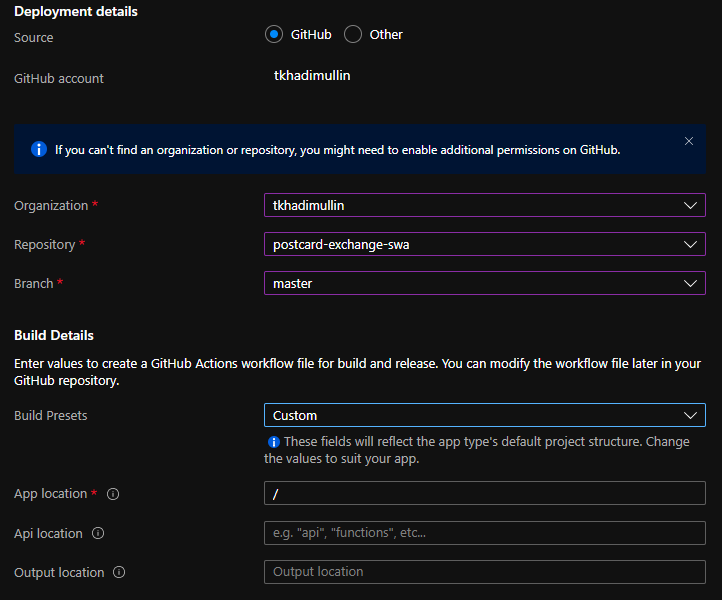

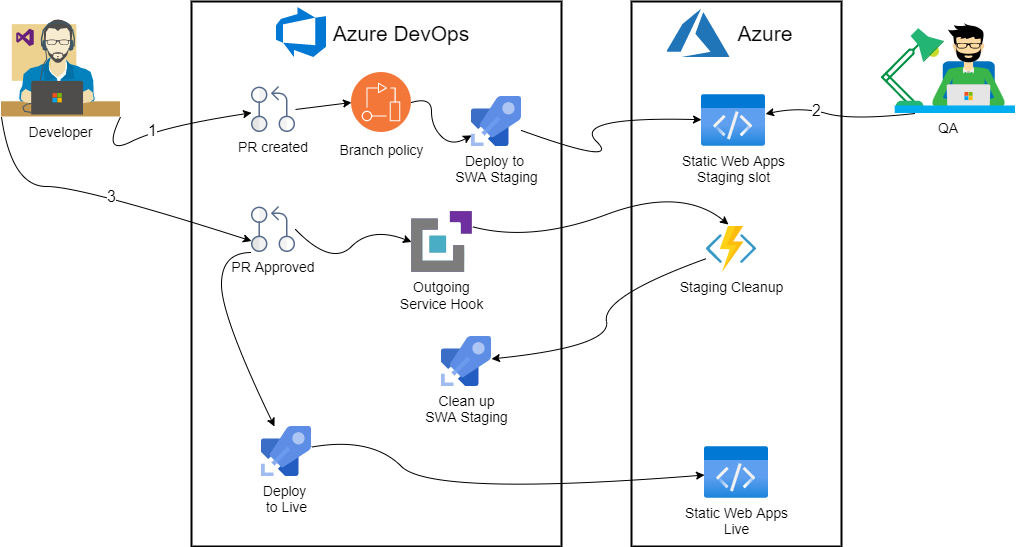

Just running docker containers is not really that useful, as these actions are intended for CI/CD pipelines. Unfortunately, there’s no single config file we can edit to achieve this with Azure DevOps: we have to take a more hands-on approach. Roughly, the solution looks like so:

First, we’ll create a branch policy to kick off deployment to a staging environment. Then we’ll use a Service Hook to trigger an Azure Function on a successful PR merge. Finally, the stock-standard Static Web Apps task will run on the master branch when a new commit gets pushed.

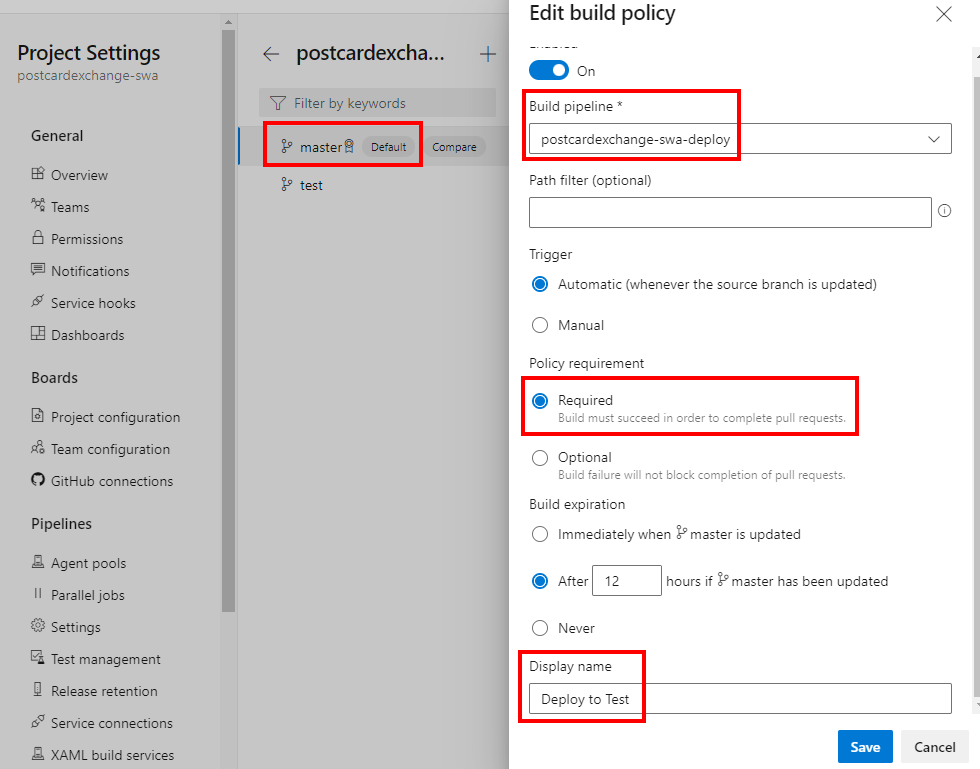

Branch policy

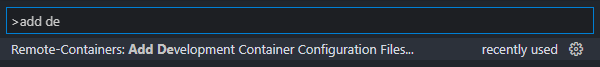

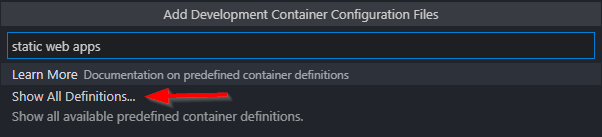

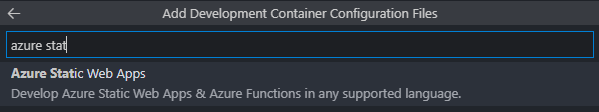

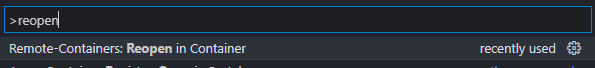

Creating the branch policy itself is very straightforward. First, we’ll need a separate pipeline definition:

pr:

- master

pool:

vmImage: ubuntu-latest

steps:

- checkout: self

- bash: |

docker run \

--rm \

-e INPUT_AZURE_STATIC_WEB_APPS_API_TOKEN=$(deployment_token) \

-e DEPLOYMENT_PROVIDER=DevOps \

-e GITHUB_WORKSPACE="/working_dir" \

-e IS_PULL_REQUEST=true \

-e BRANCH=$(System.PullRequest.SourceBranch) \

-e ENVIRONMENT_NAME="TESTENV" \

-e PULL_REQUEST_TITLE="PR # $(System.PullRequest.PullRequestId)" \

-e INPUT_APP_LOCATION="." \

-e INPUT_API_LOCATION="./api" \

-v ${PWD}:/working_dir \

mcr.microsoft.com/appsvc/staticappsclient:stable \

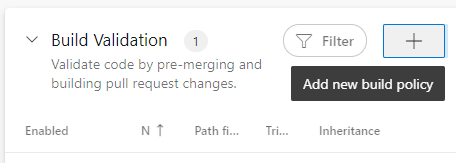

./bin/staticsites/StaticSitesClient uploadIn here we use a PR trigger, along with some variables to push through to Azure Static Web Apps. Apart from that, it’s a simple docker run that we have already had success with. To hook it up, we need a Build Validation check that would trigger this pipeline:
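For those who prefer scripting over clicking through the branch policy screens, the same Build Validation check could probably be registered with the Azure CLI. The following is only a sketch: it assumes the `azure-devops` CLI extension is installed and that a default organization and project are already configured, the display name and the 720-minute validity are arbitrary choices, and the `run` and `create_build_validation` helpers are made up for illustration. With `DRY_RUN=1` the command is printed rather than executed.

```shell
#!/bin/sh
# Sketch: register the PR pipeline as a Build Validation policy on master.
# Assumes the azure-devops CLI extension; ids passed in are placeholders.

# Runs the given command, or just prints it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# $1 = repository id (GUID), $2 = id of the PR pipeline definition
create_build_validation() {
  run az repos policy build create \
    --repository-id "$1" \
    --branch master \
    --build-definition-id "$2" \
    --display-name "Deploy staging environment" \
    --blocking true \
    --enabled true \
    --manual-queue-only false \
    --queue-on-source-update-only false \
    --valid-duration 720
}

# Example (dry run): DRY_RUN=1; create_build_validation "<your repository id>" 42
```

Printing the command first is a cheap way to double-check the ids before a policy actually lands on the branch.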

Teardown pipeline definition

The second part is a bit more complicated and requires an Azure Function to pull off. Let’s start by defining the pipeline that our function will run:

    trigger: none

    pool:
      vmImage: ubuntu-latest

    steps:
    - script: |
        docker run --rm \
          -e INPUT_AZURE_STATIC_WEB_APPS_API_TOKEN=$(deployment_token) \
          -e DEPLOYMENT_PROVIDER=DevOps \
          -e GITHUB_WORKSPACE="/working_dir" \
          -e IS_PULL_REQUEST=true \
          -e BRANCH=$(PullRequest_SourceBranch) \
          -e ENVIRONMENT_NAME="TESTENV" \
          -e PULL_REQUEST_TITLE="PR # $(PullRequest_PullRequestId)" \
          mcr.microsoft.com/appsvc/staticappsclient:stable \
          ./bin/staticsites/StaticSitesClient close --verbose
      displayName: 'Cleanup staging environment'

One thing to note here is the manual trigger: we opt out of CI/CD. We also make note of the pipeline variables (PullRequest_SourceBranch and PullRequest_PullRequestId) that our function will have to populate.
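Before wiring anything else up, it can be handy to queue this pipeline by hand and confirm that the teardown works. Below is a rough sketch using curl against the same Pipelines `runs` endpoint, with the same variable names, as the C# function in the next section. `ADO_ORG`, `ADO_PROJECT`, `ADO_PIPELINE_ID` and `ADO_PAT` are hypothetical environment variables, the helper names are made up, and the pull request id is sent as a string here. Whatever names end up in the payload need to match what the pipeline definition actually reads.

```shell
#!/bin/sh
# Sketch: queue the teardown pipeline through the Pipelines "runs" REST API.
# ADO_ORG, ADO_PROJECT, ADO_PIPELINE_ID and ADO_PAT are hypothetical names.

# Basic auth value for a PAT: empty user name, PAT as the password.
# tr strips the line wrapping some base64 implementations add.
basic_auth() {
  printf ':%s' "$1" | base64 | tr -d '\n'
}

# JSON body with the two variables; $1 = source ref, $2 = pull request id.
run_payload() {
  printf '{"variables":{"System.PullRequest.SourceBranch":{"isSecret":false,"value":"%s"},"System.PullRequest.PullRequestId":{"isSecret":false,"value":"%s"}}}' "$1" "$2"
}

# POSTs the payload; fails loudly on a non-2xx response.
queue_teardown() {
  curl --fail --silent --show-error \
    -H "Authorization: Basic $(basic_auth "$ADO_PAT")" \
    -H "Content-Type: application/json" \
    -d "$(run_payload "$1" "$2")" \
    "https://dev.azure.com/$ADO_ORG/$ADO_PROJECT/_apis/pipelines/$ADO_PIPELINE_ID/runs?api-version=6.0-preview.1"
}

# Example: queue_teardown "refs/heads/TEST_BRANCH" 42
```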

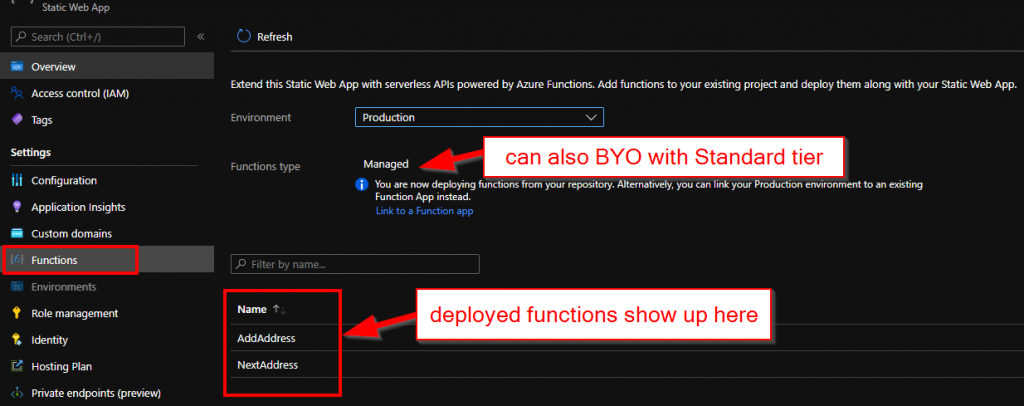

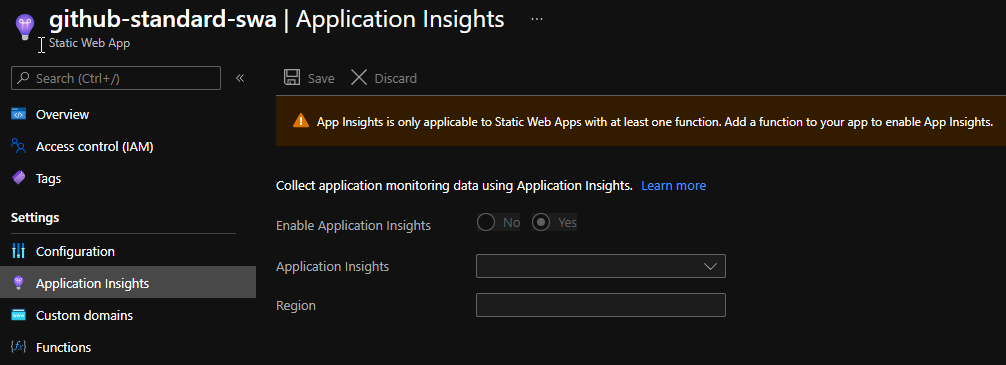

Azure Function

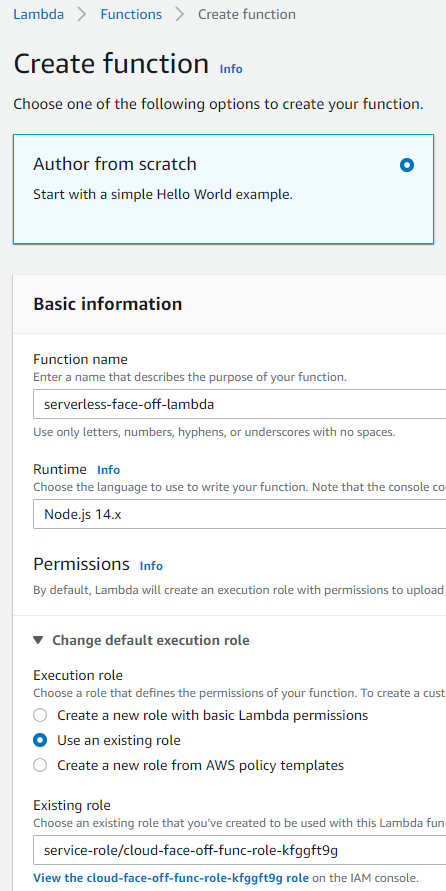

It really doesn’t matter what sort of function we create. In this case we opt for C# code that we can author straight from the Portal, for simplicity. We also need to generate a PAT (personal access token) so our function can call ADO.

#r "Newtonsoft.Json"

using System.Net;

using System.Net.Http.Headers;

using System.Text;

using Microsoft.AspNetCore.Mvc;

using Microsoft.Extensions.Primitives;

using Newtonsoft.Json;

private const string personalaccesstoken = "<your PAT>";

private const string organization = "<your org>";

private const string project = "<your project>";

private const int pipelineId = <your pipeline Id>;

public static async Task<IActionResult> Run([FromBody]HttpRequest req, ILogger log)

{

log.LogInformation("C# HTTP trigger function processed a request.");

string requestBody = await new StreamReader(req.Body).ReadToEndAsync();

dynamic data = JsonConvert.DeserializeObject(requestBody);

log.LogInformation($"eventType: {data?.eventType}");

log.LogInformation($"message text: {data?.message?.text}");

log.LogInformation($"pullRequestId: {data?.resource?.pullRequestId}");

log.LogInformation($"sourceRefName: {data?.resource?.sourceRefName}");

try

{

using (HttpClient client = new HttpClient())

{

client.DefaultRequestHeaders.Accept.Add(new System.Net.Http.Headers.MediaTypeWithQualityHeaderValue("application/json"));

client.DefaultRequestHeaders.Authorization = new AuthenticationHeaderValue("Basic", ToBase64(personalaccesstoken));

string payload = @"{

""variables"": {

""System.PullRequest.SourceBranch"": {

""isSecret"": false,

""value"": """ + data?.resource?.sourceRefName + @"""

},

""System.PullRequest.PullRequestId"": {

""isSecret"": false,

""value"": "+ data?.resource?.pullRequestId + @"

}

}

}";

var url = $"https://dev.azure.com/{organization}/{project}/_apis/pipelines/{pipelineId}/runs?api-version=6.0-preview.1";

log.LogInformation($"sending payload: {payload}");

log.LogInformation($"api url: {url}");

using (HttpResponseMessage response = await client.PostAsync(url, new StringContent(payload, Encoding.UTF8, "application/json")))

{

response.EnsureSuccessStatusCode();

string responseBody = await response.Content.ReadAsStringAsync();

return new OkObjectResult(responseBody);

}

}

}

catch (Exception ex)

{

log.LogError("Error running pipeline", ex.Message);

return new JsonResult(ex) { StatusCode = 500 };

}

}

private static string ToBase64(string input)

{

return Convert.ToBase64String(System.Text.ASCIIEncoding.ASCII.GetBytes(string.Format("{0}:{1}", "", input)));

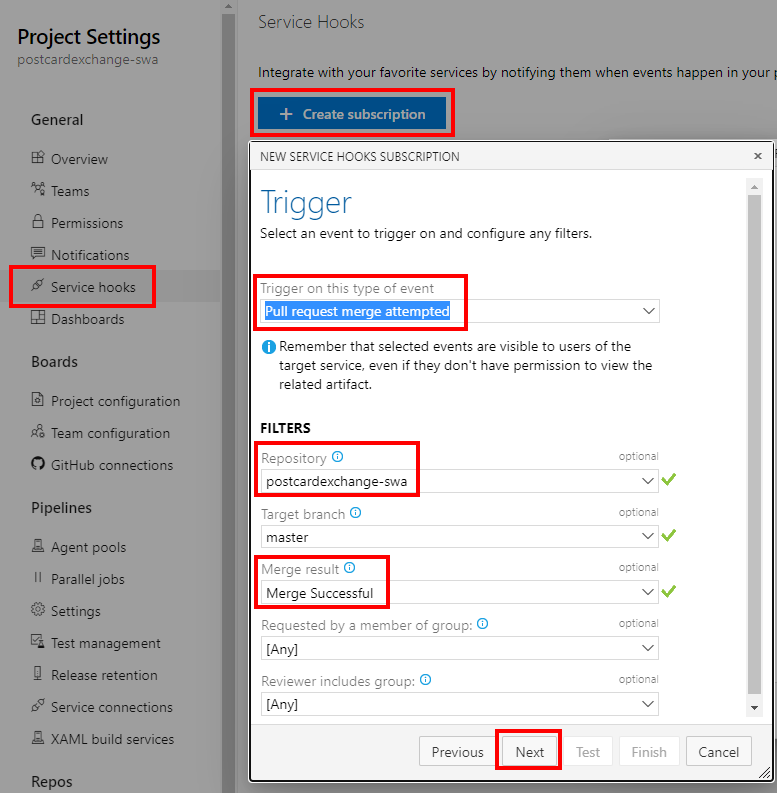

}Service Hook

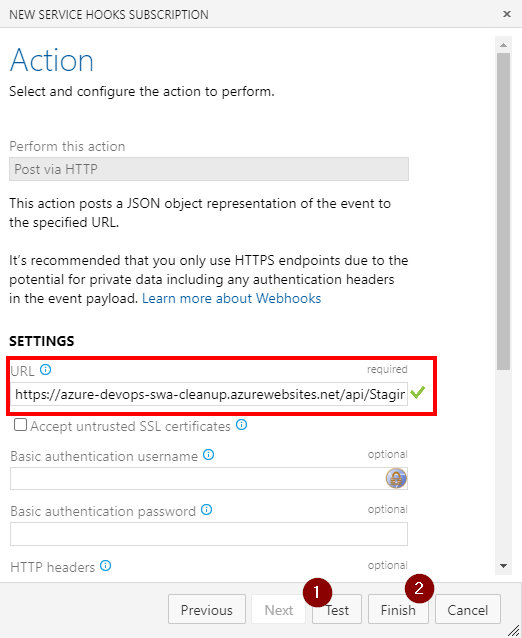

With all the prep work done, all we have left to do is connect the PR merge event to the Function call:

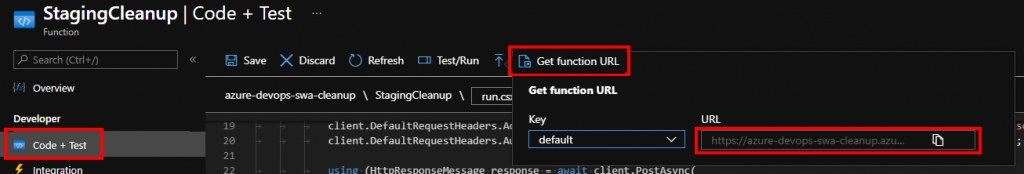

The function URL should contain the access key, if one was defined. The easiest way is probably to copy it straight from the Portal’s Code + Test blade:

It may also be a good idea to test the connection on the second form before finishing up.
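The function can also be exercised outside of the Service Hook wizard by posting a hand-made event to it. The sketch below sends only the fields the function logs or forwards; a real Service Hook payload carries a lot more, and the `git.pullrequest.merged` event type is assumed here to be the id of the PR merge event. `FUNCTION_URL` is a hypothetical variable holding the full function URL (access key included), and both helper names are made up. Keep in mind that a successful call queues a real teardown run.

```shell
#!/bin/sh
# Sketch: send the function a minimal, hand-made "pull request merged" event.
# FUNCTION_URL is a hypothetical variable with the full URL, access key included.

# $1 = pull request id, $2 = source ref name
sample_event() {
  printf '{"eventType":"git.pullrequest.merged","message":{"text":"sample"},"resource":{"pullRequestId":%s,"sourceRefName":"%s"}}' "$1" "$2"
}

# POSTs the sample event; fails loudly on a non-2xx response.
post_sample_event() {
  curl --fail --silent --show-error \
    -H "Content-Type: application/json" \
    -d "$(sample_event "$1" "$2")" \
    "$FUNCTION_URL"
}

# Example: post_sample_event 42 "refs/heads/TEST_BRANCH"
```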

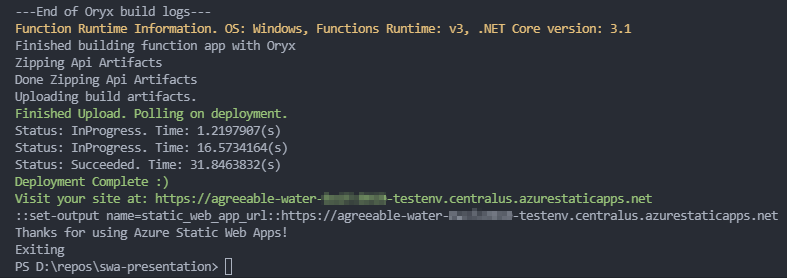

Conclusion

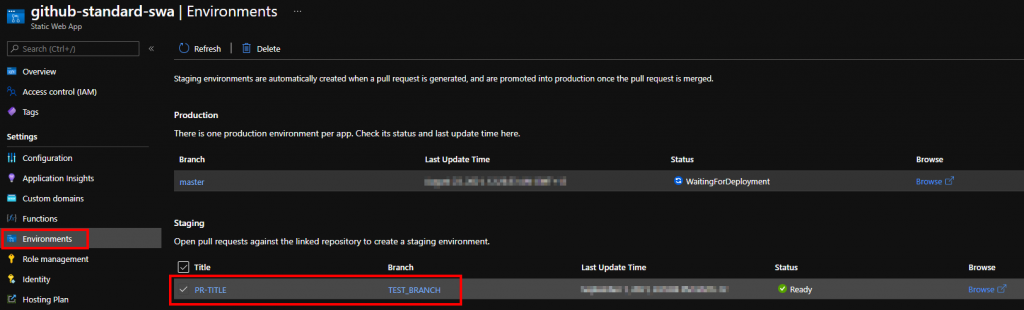

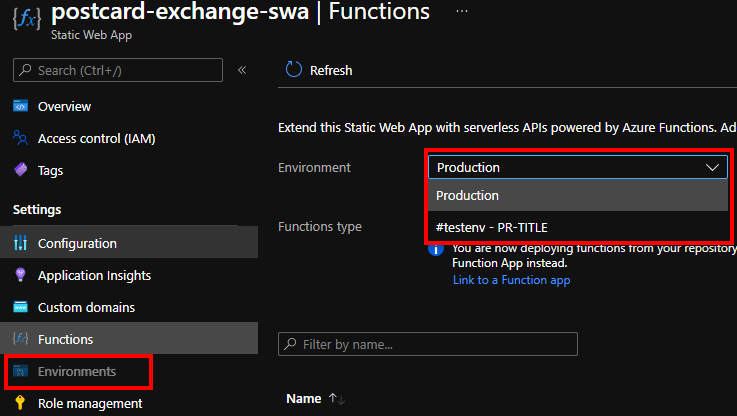

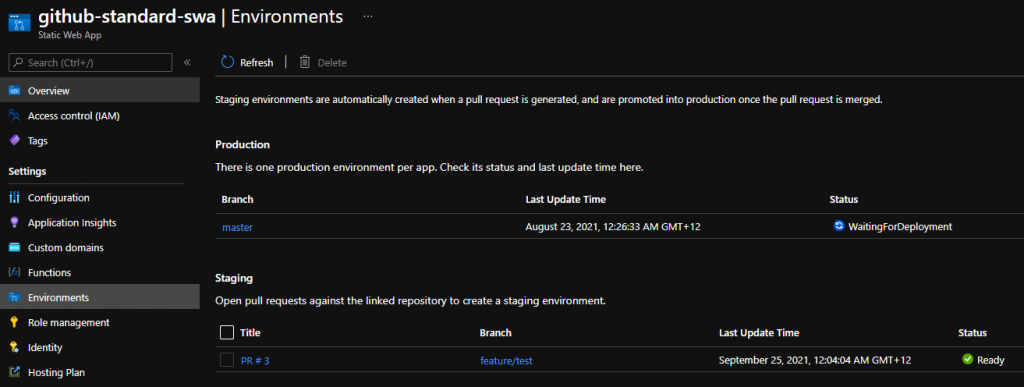

Once everything is connected, the pipelines should create and delete staging environments much like GitHub does. One possible improvement would be to replace the branch policy with yet another Service Hook to a Function, so that the PR title gets correctly reflected on the Portal.

But I’ll leave it as a challenge for readers to complete.